How Humans Learn

Rethinking How AI Systems Are Trained

Last updated February 22, 2026.

Rich Sutton’s The Bitter Lesson taught AI researchers a crucial insight: general methods that scale with computation (through search and learning) ultimately win over approaches that hard-code human knowledge.

Seventy years of AI history, from chess to Go to computer vision, proved this pattern. The lesson is blunt: don’t build in what we know; build in the ability to discover.

A defining example sits at the center of current AI practice. Directly feeding human experience and knowledge into networks through supervised learning (training models to mimic human outputs) is, in spirit, the kind of human-knowledge-dependent approach that The Bitter Lesson cautions against.

Developmental science reveals a complementary insight that extends The Bitter Lesson: don’t try to construct adult intelligence directly. Build the sequence through which intelligence emerges:

General learning capabilities must be developed before specialized skills can emerge.

Learning is not purely monotonic improvement. Adult intelligence emerges from a developmental sequence, not from direct construction. Development is a sequence of trade-offs; different stages rely on different mechanisms.

As learning progresses, the brain itself changes its computational substrate.

Evolution spent millions of years solving this problem. We should pay attention.

Part I: Early Learning and Meta-Capabilities

Infant learning is neither random exploration nor pure imitation. It’s biased exploration: curiosity-driven, not task-driven.

Infants observe, explore, and learn from what happens, without any explicit goal beyond the drive to understand. The evidence points to four core mechanisms operating simultaneously:

Statistical Learning. From birth, infants extract statistical regularities from continuous input. In language, they identify which syllables commonly appear together (the classic evidence comes from 8-month-old infants, though the mechanism appears earlier).

In vision, they detect patterns in object movement. This happens automatically, without instruction. Infants compress sensory experience into predictable patterns: building generative models, not memorizing everything.

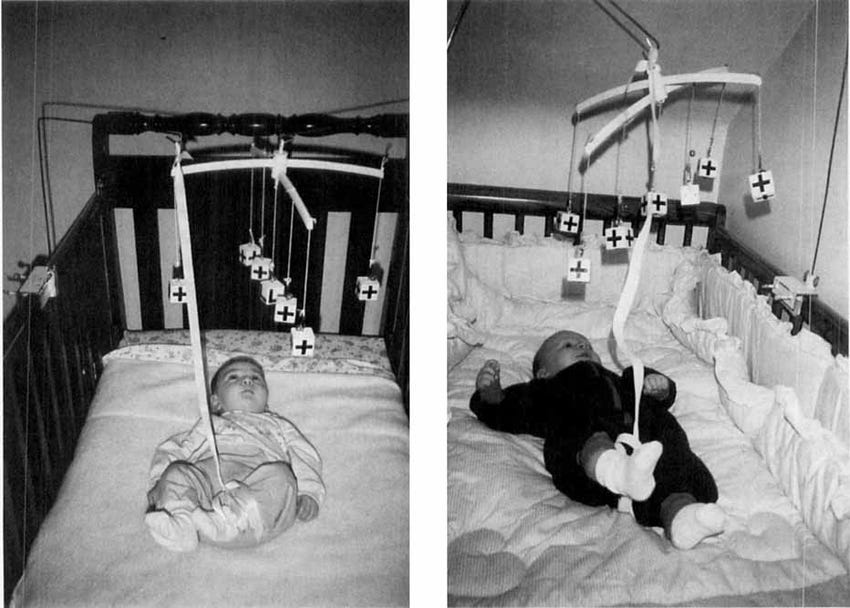
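One common way to make the syllable statistics described above concrete is the transitional probability P(next | current): high inside a word, lower across a word boundary. The following is a minimal sketch under that assumption, not a model of infant cognition. The function name `transitional_probabilities`, the three nonsense words, and their ordering are all made up for illustration.

```python
from collections import Counter


def transitional_probabilities(syllables):
    """Return P(next | current) for every adjacent syllable pair in the stream."""
    pair_counts = Counter(zip(syllables, syllables[1:]))
    # Count each syllable only where it has a successor (all but the last position).
    first_counts = Counter(syllables[:-1])
    return {
        (a, b): count / first_counts[a]
        for (a, b), count in pair_counts.items()
    }


# Hypothetical continuous stream built from three invented "words".
words = {"A": ["bi", "da", "ku"], "B": ["pa", "do", "ti"], "C": ["go", "la", "bu"]}
order = "ABCACBABCBAC"  # arbitrary word order with no pauses between words
stream = [syllable for w in order for syllable in words[w]]

tp = transitional_probabilities(stream)
print(tp[("bi", "da")])  # within-word pair
print(tp[("ku", "pa")])  # pair that spans a word boundary
```

In this toy stream the within-word pair ("bi", "da") has probability 1.0, while the boundary pair ("ku", "pa") has probability 0.5, so dips in transitional probability mark candidate word boundaries.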

Associative Learning. Infants learn “if I do X, then Y happens” relationships. They actively map the contingencies between their own actions and environmental outcomes. A classic demonstration of this comes from mobile conjugate reinforcement paradigms: when leg movements are coupled to a mobile or sound, infants rapidly increase those movements, and show clear distress when the contingency is removed. This is not mere reflexive responding; infants are tracking what they can control.

This sensitivity to controllability goes further than simple conditioning. Stahl and Feigenson (2015) showed that 11-month-old infants, when presented with objects that violate physical laws, not only explore those objects more, but do so in ways that are specifically tailored to the type of violation observed - a pattern consistent with hypothesis-testing rather than undirected curiosity. In other words, a violated expectation does not just capture attention; it directs exploration toward resolving the specific inconsistency.

Taken together, these findings reveal a coherent picture: from early infancy, babies are not passive recipients of experience. They are building causal models of their environment, probing what they can and cannot influence, and using surprise as a signal for targeted learning.
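The idea of tracking what one can control can be sketched with a standard contingency measure, delta-P: the probability of the outcome given the action minus its probability without the action. This is an illustrative assumption about how controllability might be quantified, not a claim about what infants compute. The function name `contingency` and the trial counts are hypothetical.

```python
def contingency(trials):
    """Delta-P = P(outcome | action) - P(outcome | no action).

    `trials` is a list of (acted, outcome) boolean pairs.
    """
    with_action = [outcome for acted, outcome in trials if acted]
    without_action = [outcome for acted, outcome in trials if not acted]
    if not with_action or not without_action:
        raise ValueError("need trials both with and without the action")
    return sum(with_action) / len(with_action) - sum(without_action) / len(without_action)


# Hypothetical data: the mobile moves after most kicks and rarely otherwise.
coupled = ([(True, True)] * 9 + [(True, False)] * 1
           + [(False, True)] * 1 + [(False, False)] * 9)
# Hypothetical data: the mobile moves half the time regardless of kicking.
uncoupled = ([(True, True)] * 5 + [(True, False)] * 5
             + [(False, True)] * 5 + [(False, False)] * 5)

print(contingency(coupled))
print(contingency(uncoupled))
```

A value near 1 means the action reliably produces the outcome; a value near 0 means the outcome is not under the learner's control, which corresponds to the phase where the contingency has been removed.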

Active Information Sampling. Infants do not distribute attention randomly. Instead, they selectively orient toward stimuli that sit at the boundary of their current understanding - neither so simple as to be uninformative, nor so complex as to be incomprehensible. This is known as the Goldilocks effect: infants are not drawn to novelty for its own sake, but to experiences that offer the greatest opportunity for learning progress.

This distinction matters. A purely novelty-driven system would attend to anything unfamiliar, indiscriminately. What infants actually do is considerably more sophisticated: they implicitly assess the learnability of each stimulus and allocate attention accordingly, optimizing for information gain rather than mere stimulation. The time-lapse below illustrates this clearly. When a toy offers no challenge, the infant loses interest and moves on; when it is too complex, engagement collapses into frustration. It is in the middle ground, where difficulty meets current capability, that attention is most sustained and learning proceeds fastest.
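The difference between novelty-seeking and learning-progress-seeking can be sketched in a few lines. The sketch assumes, purely for illustration, that each stimulus has a running history of prediction errors and that learning progress is the recent drop in mean error. The names `learning_progress` and `choose_stimulus` and all the numbers are hypothetical.

```python
def learning_progress(errors, window=3):
    """Drop in mean prediction error from the previous window to the latest one."""
    if len(errors) < 2 * window:
        return 0.0  # not enough history to estimate progress
    older = errors[-2 * window:-window]
    recent = errors[-window:]
    return sum(older) / window - sum(recent) / window


def choose_stimulus(error_histories, window=3):
    """Attend to the stimulus whose prediction error is falling fastest."""
    return max(
        error_histories,
        key=lambda name: learning_progress(error_histories[name], window),
    )


# Hypothetical prediction-error histories for three toys.
histories = {
    "too_simple": [0.05, 0.05, 0.04, 0.05, 0.04, 0.05],   # already predictable
    "learnable": [0.90, 0.80, 0.70, 0.50, 0.40, 0.30],    # error steadily falling
    "too_complex": [0.95, 0.97, 0.94, 0.96, 0.95, 0.96],  # error stays high
}

print(choose_stimulus(histories))
```

A purely novelty-driven rule would pick the toy with the highest error, here `too_complex`. The progress-based rule picks `learnable`, because neither a flat low error nor a flat high error offers anything to gain.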

Social Learning. Here’s where the evidence gets contentious. The neonatal imitation debate remains unresolved. Andrew Meltzoff’s classic work suggests newborns can match facial gestures (especially tongue protrusion). But large-scale longitudinal studies haven’t provided convincing evidence - what looks like “imitation” might be arousal responses, oral reflexes, or methodological artifacts. Some specific actions, such as tongue protrusion, may be more robust, but overall the methods are disputed and the conclusions inconsistent.

What’s robust from birth: infants are socially tuned - preferring faces, voices, interactive rhythms. Social learning doesn’t start with copying actions. It begins with joint attention, sharing focus with others, which scaffolds into understanding intentions, then imitation and teaching.

As children grow and language develops, imitation becomes increasingly important.

Infant Goals: Implicit Objective Functions

Do infants have goals?

Yes, but not explicit, verbalized, long-term plans. They’re guided by implicit objective functions:

Maintain comfort and safety (temperature, hunger, pain).

Seek controllable, learnable information (neither too predictable nor too chaotic).

Establish social connection (preference for human interaction).

The evidence for controllability learning is particularly strong. Mobile conjugate reinforcement studies show infants not only learn action-outcome contingencies but actively work to maintain or restore control relationships. When the contingency is removed, infants show increased activity and distress: they’re trying to re-establish the predictable relationship between their actions and environmental responses.

These aren’t conscious goals but loss functions that shape behavior.

From the mechanisms discussed above, one meta-goal often appears to dominate: maximize learning progress. This reveals something profound: even without explicit goals, infants are goal-directed in a deeper sense.

Part II: Development as Reconfiguration and Trade-Off

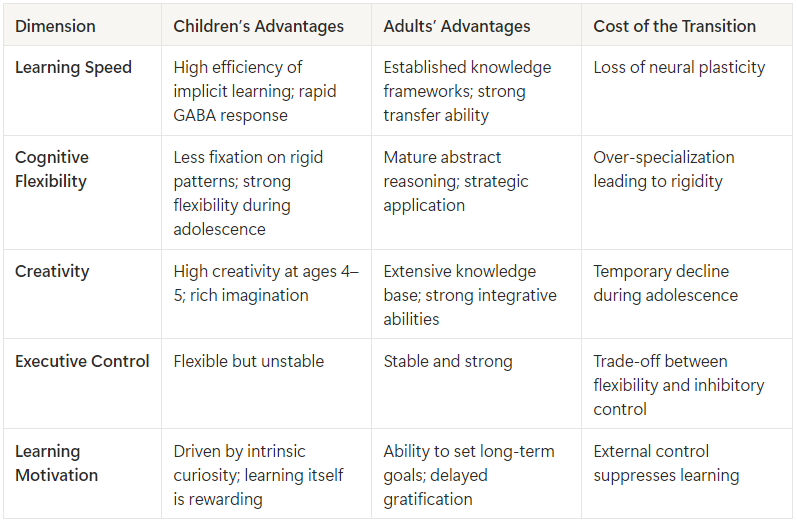

As children develop, they get better at some things while getting worse at others. Maturation isn’t a uniform upgrade: it’s a reconfiguration of capabilities.

This isn’t a bug. It’s the fundamental pattern of human learning.

Development is Not Monotonic Improvement

Development is a series of reconfigurations, each optimizing for different objectives. Infancy optimizes for breadth of learning, discovering what’s learnable. Childhood optimizes for balancing exploration with social integration. Adolescence optimizes for flexibility in changing environments. Adulthood optimizes for reliable expertise and efficient performance.

Think of it like stem cells becoming specialized cells. Early development is all about generalization: building broad meta-capabilities, the raw learning machinery. As the organism grows, capabilities gradually converge and specialize: social skills, language, domain knowledge. The stem cell doesn’t know what it will become. It just maintains the capacity to become anything. Development follows a similar logic: early learning systems preserve optionality, while later systems trade optionality for specialization.

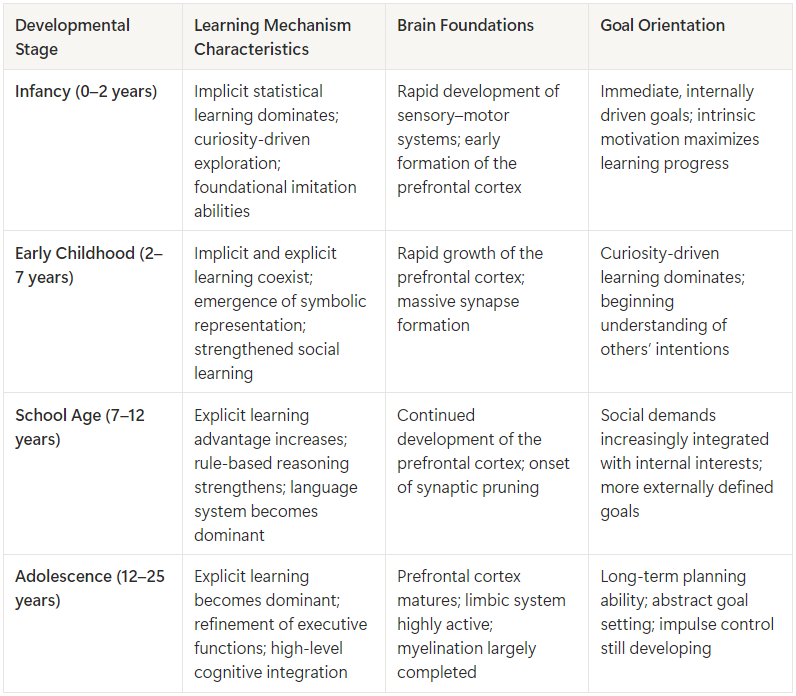

Note: Age ranges and developmental phases should be understood as functional summaries of shifting emphases between coexisting learning systems, rather than as discrete stages.

The Cost of Instruction

Each stage sacrifices something to gain something else. The trade-offs show up across key dimensions of learning and control.

As children develop, gains in explicit, rule-based learning come with losses elsewhere. Children around ages 8–12 often perform worse than younger children on certain implicit learning tasks. The reason is not a lack of ability, but the emergence of explicit rule-following systems that are difficult to disengage. When tasks require integrating information in ways that do not conform to simple, verbalizable rules, older children struggle precisely because they search for explicit rules where none exist. Their verbal and analytical systems interfere with the implicit, holistic learning that younger children perform effortlessly.

This trade-off is visible in everyday behavior. A three-year-old’s apparent “disobedience” may reflect the brain protecting its implicit learning capacity: resisting external constraints that would disrupt ongoing pattern absorption. Conversely, an adult’s rigidity is not a failure of learning, but the cost of stability and expertise acquired through years of rule-guided practice. These behaviors are not personality traits; they are behavioral expressions of underlying learning trade-offs.

The same mechanism explains why instruction can impair learning under certain conditions. When people must follow instructions that conflict with their own intuitions, learning degrades. The cognitive control required to override internal judgments imposes a cost, reducing how effectively the brain updates its internal models. Self-directed exploration in children is powerful partly for this reason: it carries minimal cognitive control costs. Adult learning often feels effortful because it requires constant negotiation between intuition and instruction. Second language acquisition illustrates this contrast: children often succeed by allowing learning to unfold implicitly, while adults tend to overanalyze and interfere with the process.

Crucially, this trade-off serves a broader function. Instruction is not only about learning efficiency; it is also about social coordination. Explicit rules can be verbalized, shared, and taught. School-age children learn to follow instructions not merely to acquire knowledge, but to adopt norms, align behavior, and coordinate with others. The explicit rule-following system enables cultural transmission and large-scale cooperation. The learning cost is real, but it is the price paid for social integration.

Each stage sacrifices something to gain something else. The costs aren’t bugs - they’re the necessary prices of new capabilities.

The Neural Mechanism

The prefrontal cortex (responsible for executive control, planning, rule-following) develops slowly, with maturation generally continuing into the mid-20s. This creates distinct phases:

Early childhood (2-7): Rapid synapse formation, highly plastic, minimal top-down control. Learning is experiential, exploration-driven, intrinsically motivated.

School age (7-12): Executive functions come online. The child can follow instructions, suppress impulses, apply explicit strategies. But this top-down control creates cognitive control costs - learning under instruction becomes less efficient than self-directed learning for certain tasks.

Adolescence (12-25): Asymmetric development. The limbic system (emotions, rewards, social sensitivity) is highly active while the prefrontal cortex is still maturing. This creates both unique learning flexibility and characteristic instability. Evidence from rodent studies suggests adolescents may show greater flexibility in reversal learning than adults, being less prone to training-induced rigidity. Whether this translates fully to human adolescent cognition remains under investigation. Adolescents are, however, temporarily worse at certain types of creativity that require a broad knowledge base.

Recent neuroscience research has uncovered a mechanism in visual perceptual learning: when children engage in visual learning tasks, GABA (an inhibitory neurotransmitter) rapidly increases in the visual cortex and persists for minutes after learning. Functionally, this transient increase in inhibition helps stabilize newly learned representations by suppressing competing neural activity, allowing patterns to consolidate quickly rather than being overwritten. Adults show no GABA change from the same training. In this domain, the finding supports the observation that children can absorb certain skills with an efficiency adults struggle to match. It’s not abstract “neuroplasticity” but a specific neurochemical mechanism.

Part III: Rethinking How AI Systems Are Trained

Sutton’s Bitter Lesson showed that hard-coding human knowledge fails in the long run. Supervised learning on human-generated data (directly feeding human experience into networks and training models to mimic human outputs) is perhaps the most prominent example of this pattern in modern AI. LLMs are built this way: trained to reproduce human knowledge rather than to discover independently.

However, in natural environments, most learning relies on active experience and feedback (trying actions, observing outcomes, and refining behavior) rather than explicit instruction or demonstration.

Human development suggests why this pattern is effective. Early learning is not organized around mastering specific tasks or reproducing existing solutions. Instead, infants first develop meta-learning capacities: sensitivity to statistical regularities, the ability to detect and maintain action–outcome contingencies, and mechanisms for actively sampling informative experiences. These capacities shape how learning proceeds before they determine what is learned.

Only after this general-purpose learning machinery is in place do explicit goals, rules, and instruction become reliable tools for accelerating learning. Instruction can transmit existing knowledge efficiently, but it presupposes a learner that already knows how to learn.

From this perspective, infant-like learning is not an immature form of adult learning, but a phase optimized for acquiring the capacity to acquire skills.

Training Might Need to Be Staged

Adult intelligence doesn’t emerge from direct construction - it emerges from a developmental sequence. Infant meta-learning gives rise to child exploration, which enables adolescent flexibility, which consolidates into adult expertise. Each stage has different computational properties. Each makes different trade-offs.

The infant period shows that general-purpose learning machinery emerges before task-specific skills. Biology builds statistical learning, associative learning, and active information seeking first. Specific skills come later.

Could AI training benefit from similar staging?

Perhaps we should develop meta-capabilities first, with no explicit task objectives or human constraints. Models might need space to discover patterns through experience before we layer on cultural knowledge and social alignment. The capabilities needed for efficient learning might themselves need to be learned first - not assumed as given.

LLMs and the Role of Imitation

This suggests a possible reframing of supervised learning. We often treat supervised learning and imitation as foundational training mechanisms - ways to provide priors.

But human development suggests they might serve a different function: alignment and socialization. Mimicry is a fast way to transmit existing knowledge - likely an evolved mechanism for cultural transmission. In other words, mimicry is the distribution mechanism, not the discovery mechanism.

If this framing holds, the sequence of training may matter more than we currently assume. In human development, schooling comes after core learning capacities have already formed, serving primarily to transmit social norms, shared knowledge, and coordination rules rather than optimizing individual learning efficiency.

By analogy, using supervised learning and RLHF after experience-based learning has developed core capabilities may better reflect how humans integrate social knowledge.
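To make the ordering idea concrete, here is a purely hypothetical sketch of a staged schedule in which the weight on self-generated objectives starts high and the weight on imitation and human feedback is layered in later. Nothing here comes from an existing training recipe: the stage names, boundaries, and weights are placeholder assumptions chosen only to show the shape of such a schedule.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Stage:
    name: str
    end: float        # fraction of total training at which this stage ends
    intrinsic: float  # weight on self-generated, curiosity-like objectives
    imitation: float  # weight on supervised imitation and human feedback


# Placeholder numbers, not tuned or validated values.
SCHEDULE = [
    Stage("meta-capabilities", end=0.5, intrinsic=1.0, imitation=0.0),
    Stage("exploration with early socialization", end=0.8, intrinsic=0.6, imitation=0.4),
    Stage("alignment and consolidation", end=1.0, intrinsic=0.2, imitation=0.8),
]


def stage_at(progress, schedule=SCHEDULE):
    """Return the stage that applies at a training progress in [0, 1]."""
    if not 0.0 <= progress <= 1.0:
        raise ValueError("progress must be between 0 and 1")
    for stage in schedule:
        if progress <= stage.end:
            return stage
    return schedule[-1]


print(stage_at(0.1).name)
print(stage_at(0.9).name)
```

The point of the sketch is the ordering, with imitation arriving after experience-based learning rather than before it. Whether any particular boundaries or weights would work is an open empirical question.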

Development is Trade-Offs, Not Progress

Human development shows clearly that gaining certain capabilities costs others. Plasticity vs. stability. Implicit learning vs. explicit control. Exploration vs. exploitation. A 3-year-old’s “disobedience” might reflect the brain protecting its implicit learning capacity. An adult’s “rigidity” is the price of hard-won expertise.

Current AI research often optimizes as if all capabilities can increase together. Perhaps they can’t. We keep trying to build one model with all properties simultaneously: learn rapidly, maintain stable performance, explore broadly, execute reliably, learn implicitly, follow explicit instructions.

Human development reveals these properties exist in tension. Biology doesn’t build one brain with all properties at once - it builds a brain that changes its computational profile across development. Perhaps we need different model types for different purposes, different trade-offs made explicit, rather than one model trying to be everything.

Goal-Directedness Exists on a Spectrum

Sutton’s RL framework suggests intelligence emerges from three ingredients: (1) having a goal, (2) learning from experience through prediction and trial-and-error, and (3) leveraging computation.

The core loop: perception → action → reward. Learning happens through interaction with reality, not through mimicking descriptions of it.
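The loop above can be sketched with the simplest reinforcement learning setting, a multi-armed bandit with epsilon-greedy action selection. In a bandit there is only one situation, so the perception step is trivial. The function name `run_bandit`, the payoff probabilities, and the hyperparameters are assumptions made for illustration.

```python
import random


def run_bandit(true_probs, steps=5000, epsilon=0.1, seed=0):
    """Learn action values from reward feedback alone, with no demonstrations."""
    rng = random.Random(seed)
    n_actions = len(true_probs)
    values = [0.0] * n_actions  # estimated reward for each action
    counts = [0] * n_actions    # how often each action was tried

    for _ in range(steps):
        # Action: usually exploit the best current estimate, sometimes explore.
        if rng.random() < epsilon:
            action = rng.randrange(n_actions)
        else:
            action = max(range(n_actions), key=lambda a: values[a])

        # Reward: the environment responds; the agent never sees the true probabilities.
        reward = 1.0 if rng.random() < true_probs[action] else 0.0

        # Learning: an incremental average moves the estimate toward what was observed.
        counts[action] += 1
        values[action] += (reward - values[action]) / counts[action]

    return values, counts


values, counts = run_bandit([0.2, 0.5, 0.8])
best = max(range(len(values)), key=lambda a: values[a])
print(best, counts)
```

The agent is never told which action is best. It finds out by acting and observing the consequences, which is the contrast with mimicking descriptions.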

Infants are “goal-directed” (maximizing learning progress) without explicit goals. Adults’ explicit goals can interfere with implicit learning.

Rather than always training toward explicit reward functions, consider three alternatives: implicit objectives (information-theoretic measures), emergent objectives (goals arising from simpler drives), and hierarchical objectives (a meta-goal of “become better at learning” shaping task goals).

The infant pattern suggests “maximize your ability to learn” might be more robust as a long-term objective than “maximize performance on specific tasks.”
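One way to make an information-theoretic objective concrete is to reward an agent for how much an observation changes its beliefs. The sketch below assumes a simple count-based predictive model with add-one smoothing and measures the change as a KL divergence in bits. The names `predictive` and `information_gain` and the counts are hypothetical, and this is one possible measure among many, not a claim about what works at scale.

```python
import math


def predictive(counts, alpha=1.0):
    """Smoothed predictive distribution over outcomes from observed counts."""
    total = sum(counts.values()) + alpha * len(counts)
    return {k: (c + alpha) / total for k, c in counts.items()}


def information_gain(counts, observation, alpha=1.0):
    """KL(updated beliefs || previous beliefs) in bits after one new observation."""
    before = predictive(counts, alpha)
    updated = dict(counts)
    updated[observation] += 1  # the observation must be a known outcome key
    after = predictive(updated, alpha)
    return sum(p * math.log2(p / before[k]) for k, p in after.items())


# The same observation is worth more to a learner with little experience.
early = information_gain({"a": 0, "b": 0}, "a")
late = information_gain({"a": 50, "b": 50}, "a")
print(early, late)
```

The reward shrinks as the model becomes well calibrated, so an agent driven by it moves on once a source of experience stops teaching it anything. That is close in spirit to maximizing learning progress rather than task performance.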

Hardware Should Co-Evolve with Capability

Brain structure changes as capabilities evolve.

Synaptic density, myelination, regional specialization, neurochemical profiles - all shift across development. By contrast, the core Transformer architecture remains largely unchanged from pre-training to deployment.

One could imagine architectures that evolve alongside capability: for instance, highly distributed, over-parameterized models for early learning (optimized for exploration), selective pruning and compression for consolidation, sparse specialized architectures for deployment. Whether this improves performance is unclear, but the biological precedent is suggestive.
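The consolidation step can be illustrated with magnitude pruning, a standard technique that zeroes out the smallest weights of a trained model. The sketch below works on a flat list of weights to stay dependency-free. The function name `magnitude_prune` and the weight values are assumptions for illustration, and the analogy to synaptic pruning is loose.

```python
def magnitude_prune(weights, sparsity):
    """Zero out the `sparsity` fraction of weights with the smallest magnitude."""
    if not 0.0 <= sparsity <= 1.0:
        raise ValueError("sparsity must be between 0 and 1")
    n_prune = int(len(weights) * sparsity)
    # Indices ordered by absolute value, smallest first.
    order = sorted(range(len(weights)), key=lambda i: abs(weights[i]))
    pruned = list(weights)
    for i in order[:n_prune]:
        pruned[i] = 0.0
    return pruned


# Hypothetical weights from an over-parameterized early-stage model.
dense = [0.8, -0.05, 0.3, 0.01, -0.6, 0.02, 0.1, -0.9]
sparse = magnitude_prune(dense, sparsity=0.5)
print(sparse)
```

Half of the connections are removed while the largest ones are kept, giving a sparser structure for the later, more specialized phase.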

The human developmental sequence solved certain problems over millions of years. Whether those same principles transfer to artificial systems remains an empirical question. But the convergence of Sutton’s insight and developmental science points in a consistent direction: don’t hard-code what we know, and don’t shortcut the process through which learning capabilities emerge.